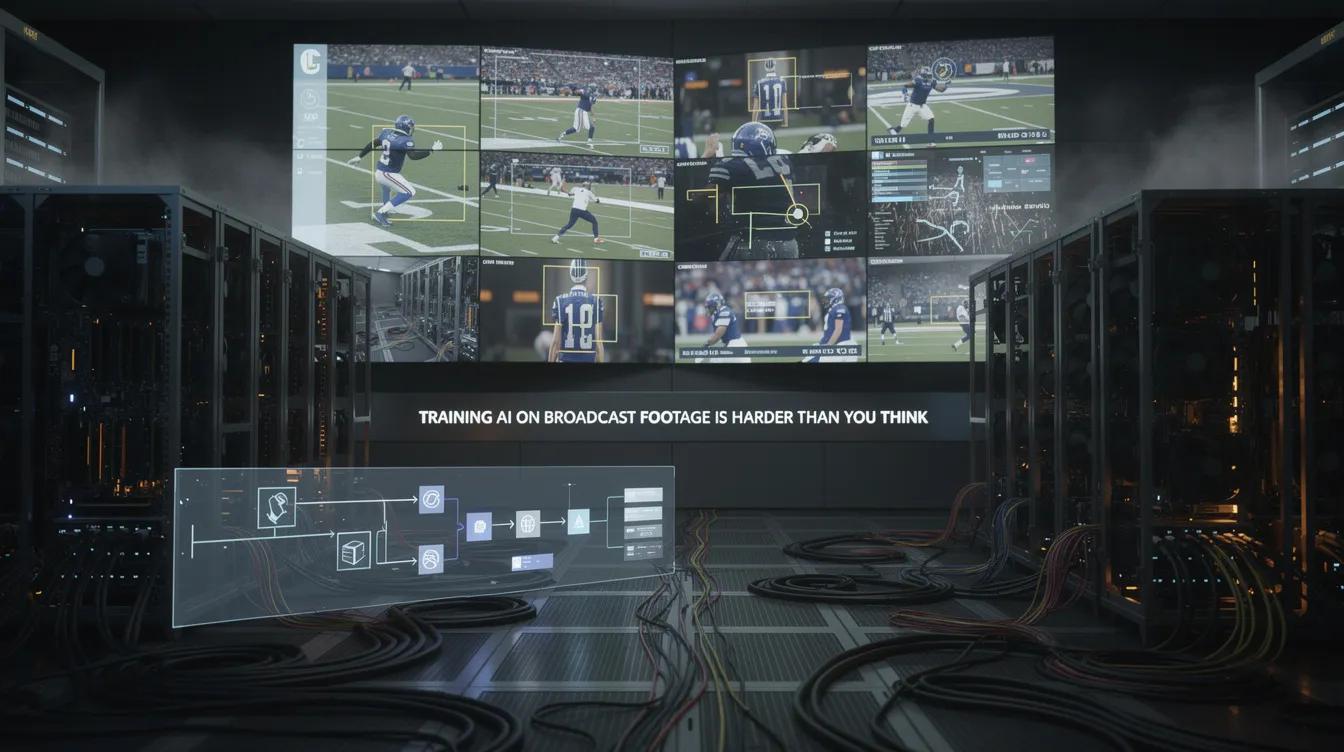

Training AI on Broadcast Footage Is Harder Than You Think

Generic computer vision works great on clean data. Real broadcast feeds — with overlays, replays, ad breaks, and camera cuts — are a different challenge entirely.

Most AI demos in sports show a clean, high-angle camera view with tracking overlays added on top. It looks impressive. But real broadcast footage is nothing like that, and the gap between demo and production is where many sports AI projects fail.

The Messy Reality of Broadcast Feeds

A live broadcast feed includes constant camera cuts (sometimes every 3 to 5 seconds), graphics overlays (scoreboards, tickers, sponsor logos), replay insertions that look like live action, ad breaks that interrupt the feed, slow-motion replays at varying speeds, and picture-in-picture compositions.

A computer vision model trained on clean overhead footage will struggle with all of this. It needs to understand not just 'what's happening in the game' but 'what is the broadcast showing me right now' — and those are fundamentally different questions.
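To make the "what is the broadcast showing me" question concrete, here is a minimal sketch of one of its simplest sub-problems: spotting hard camera cuts by comparing brightness histograms of consecutive frames. It is an illustration only, not a production method. The frame representation (a flat list of grayscale pixel values), the function names, and the threshold of 0.5 are all assumptions made for this example.

```python
from typing import List, Sequence

# Assumption: a frame is a flat sequence of grayscale pixel values (0-255).
Frame = Sequence[int]


def histogram(frame: Frame, bins: int = 16) -> List[float]:
    """Normalised brightness histogram of one frame."""
    counts = [0] * bins
    for px in frame:
        counts[min(px * bins // 256, bins - 1)] += 1
    total = len(frame) or 1  # avoid division by zero on an empty frame
    return [c / total for c in counts]


def detect_cuts(frames: Sequence[Frame], threshold: float = 0.5) -> List[int]:
    """Return each index i where a hard cut is assumed between frame i-1 and i.

    The L1 distance between two normalised histograms lies in [0, 2];
    the threshold is an illustrative value, not a tuned one.
    """
    cuts: List[int] = []
    prev = None
    for i, frame in enumerate(frames):
        hist = histogram(frame)
        if prev is not None:
            distance = sum(abs(a - b) for a, b in zip(hist, prev))
            if distance > threshold:
                cuts.append(i)
        prev = hist
    return cuts


# Two dark frames followed by two bright frames: one cut, at index 2.
frames = [[10] * 100, [12] * 100, [200] * 100, [205] * 100]
print(detect_cuts(frames))
```

A check this simple will miss dissolves and wipes, and it says nothing about whether the new shot is live action, a replay, or a graphic. Those are the harder parts of the problem.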

Why Generic Models Fail

Off-the-shelf object detection models can identify a football on a clean pitch. But they can't reliably distinguish between a live goal and a replay of that same goal being shown 30 seconds later. They can't tell the difference between a real corner kick and a graphic showing corner kick statistics.
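One way to picture the live-versus-replay problem is as a gating step: event detections only count if they fall inside a part of the feed that has been classified as live. The sketch below assumes a separate classifier has already labelled each time span of the feed. The `Segment` and `Detection` types and the label set ("live", "replay", "graphic", "ad") are hypothetical names for this example.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Segment:
    start: float  # seconds into the feed (inclusive)
    end: float    # seconds into the feed (exclusive)
    kind: str     # assumed label set: "live", "replay", "graphic", "ad"


@dataclass(frozen=True)
class Detection:
    time: float   # seconds into the feed
    event: str    # e.g. "goal", "corner"


def segment_kind_at(segments: Sequence[Segment], t: float) -> str:
    """Return the label of the segment covering time t, or "unknown"."""
    for seg in segments:
        if seg.start <= t < seg.end:
            return seg.kind
    return "unknown"


def live_events(detections: Sequence[Detection],
                segments: Sequence[Segment]) -> List[Detection]:
    """Keep only detections that fall inside a segment labelled "live"."""
    return [d for d in detections
            if segment_kind_at(segments, d.time) == "live"]


segments = [
    Segment(0, 100, "live"),
    Segment(100, 130, "replay"),  # the same goal shown again
    Segment(130, 200, "live"),
]
detections = [
    Detection(95, "goal"),     # the real goal
    Detection(125, "goal"),    # the replayed goal, seen by the detector again
    Detection(150, "corner"),
]
print(live_events(detections, segments))
```

The filter itself is trivial. The difficulty sits in producing reliable segment labels in the first place, which a generic object detector does not attempt.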

Sport-specific context matters enormously. A model that works for football won't automatically work for cricket or basketball: camera positions are different, key events look different, and the rhythm of the game is different. Each sport needs its own training data and a model architecture suited to it.

The Data Problem

Getting good training data is the hardest part. You need thousands of hours of labelled broadcast footage — not just 'there's a ball here' but 'this is a goal scored at minute 37 from open play, shown from the main camera, with this specific broadcast overlay configuration.'
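As a rough illustration of what such a label might carry, the sketch below models one annotated event as a small record. The field names and allowed values are assumptions chosen to mirror the example in the text. They do not describe any particular annotation scheme.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class BroadcastLabel:
    """One annotated event in broadcast footage (hypothetical schema)."""
    event: str                 # e.g. "goal"
    match_minute: int          # minute of the match when the event occurred
    play_type: str             # e.g. "open_play", "set_piece"
    camera: str                # e.g. "main", "behind_goal"
    overlays: List[str] = field(default_factory=list)  # graphics on screen
    is_replay: bool = False    # True if the footage is a replay of the event

    def validate(self) -> None:
        """Raise ValueError if the label is obviously malformed."""
        if not self.event:
            raise ValueError("event must not be empty")
        if self.match_minute < 0:
            raise ValueError("match_minute must be non-negative")


label = BroadcastLabel(
    event="goal",
    match_minute=37,
    play_type="open_play",
    camera="main",
    overlays=["scoreboard", "sponsor_logo"],
)
label.validate()
print(json.dumps(asdict(label), indent=2))
```

Even this toy record shows why labelling is expensive: each field is a decision an annotator has to make per event, and several of them describe the broadcast rather than the game.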

Labelled data at this level of detail is scarce in public datasets. It has to be created in partnership with actual broadcasters, using real production output. This is one of the reasons domain experience matters so much: you need to know what to label and why.

RISE's Approach

RISE trains its models specifically on broadcast output, not raw game footage. The models learn to understand the broadcast as a production — recognising camera cuts, filtering out replays, reading the scoreboard, and correlating on-screen events with their significance in the game.

This is slower and more expensive than fine-tuning a generic model, but it's the only approach that works reliably in a live production environment where mistakes are immediately visible to millions of viewers.